In recent months, we’ve been talking with funders (including private foundations, public foundations and other public funders, government, and corporate funders) about evaluation. We’ve learned that, by and large, they are very interested in evaluation and see it as an important tool for learning and action. They understand the challenges that nonprofits face around evaluation, and they acknowledge that some of these challenges arise out of their own requirements and expectations. Like nonprofits, the funders we spoke with in Ontario see the need for a better approach to nonprofit evaluation, with more focus on collaboration, learning, and action. Many are already taking steps to achieve this, but most also acknowledge that they still have much to learn. During our conversations, we gained insight into the obstacles that funders must overcome in order to change their practices. Understanding these challenges may be one of the keys to building an evaluation strategy that is truly sector driven and action focused. That is the focus of this blog post.

At each stage of our work, we have emphasized that the Sector Driven Evaluation Strategy really must be “sector driven.” That is to say, we have sought and continue to seek feedback on what we, as a sector, need in order for evaluation to be useful.

However, in our meetings and presentations to date, we have heard various versions of the following refrain from nonprofits: “That’s great, but are you talking to funders too?” It’s an important point. We can’t create a more responsive and enabling evaluation ecosystem alone, and we recognize that funders have a big role to play in the current system. In many ways, funders have been leaders in promoting evaluation.

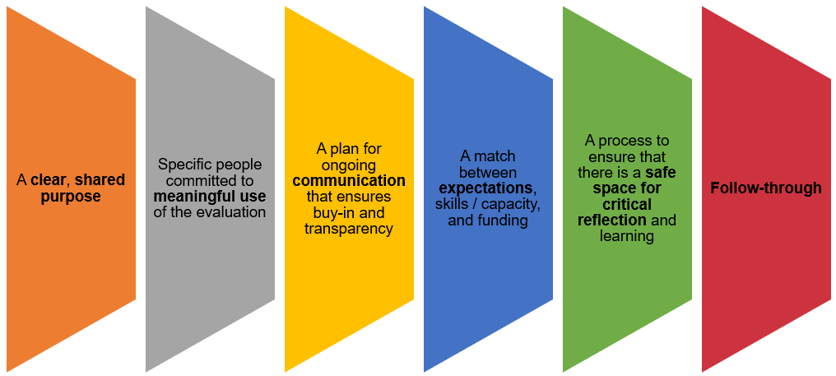

A key point we emphasized in our meetings with funders was that evaluation should be useful for everyone involved. The following graphic has helped us to frame the discussion that followed.

What Leads to a Useful Evaluation?

The research on evaluation is pretty clear. Evaluation is more likely to lead to action when the six factors below are carefully and deliberately thought through.

These factors matter even more in determining whether an evaluation is useful than how well a tool or methodology was implemented or how skilled staff were at collecting data. In essence, we wanted to raise with funders the need to focus more on how they work with grantees on these key factors, and also to understand the needs and constraints that funders themselves face.

Many of our conversations began by asking funders how they felt they were doing right now on each factor. From here, we were able to identify some common obstacles to improving evaluation practice among funders. These include:

1. Multiple layers of accountability. Most funding bodies are themselves accountable to someone else (e.g., donors, Boards of Directors, taxpayers, or higher levels of government) for how they disburse funds. As a result, there is often pressure on funders to make sure that their grants demonstrate impact and that the money was well spent. A few funders specifically noted that, in many cases, the questions they asked had more to do with reporting “up the chain” to their own constituents than with learning.

2. Insufficient communication. All the funders we spoke to valued the opportunity to communicate with their grantees. Indeed, one of the most common things we heard was that the granting officer is often the grantee’s biggest champion within the funding organization. However, some also acknowledged that it can be tough to make communication feel like a mutual conversation rather than a top-down, funder-driven one. Funders sometimes feel that they have to make do with less communication than they would like. At the same time, many funders spoke of wanting more engagement with their grantees and wanted nonprofits to know that they can and should ask questions.

3. Capturing complexity. Funders generally acknowledged that they don’t make full use of the evaluation data sent to them by grantees. Sometimes, this is an issue of technical skill and infrastructure. Like many nonprofits, funders are eager to find databases and other tools that make it easier to work with large amounts of data. Another part of this challenge has to do with distilling complex ideas into a form that is engaging and useful. Funders have to weave together evaluation data from many different kinds of organizations, highlight the ways in which their grants have contributed to this work, and show how different grants align with one another and with funding priorities. Even when funders do have the infrastructure to grapple with these questions internally, it can be a challenge to translate the answers into language that donors or taxpayers find useful.

4. Resource limitations. Generally, the funders we have met have agreed that the “six factors” listed in our diagram are important. However, putting all six into practice can be very time consuming. Several funders noted the capacity challenges they face, particularly given the number of grantees each of them has. The ideal may be to convene grantees, gather feedback on the purpose of the evaluation, support grantees along the way through open communication, and ensure a safe space to talk openly and reflect. In reality, however, some funders simply do not have the resources to do all this.

5. Roles, relationships, and power dynamics. Many nonprofits rely on funders for their continued success, and this sets up a power dynamic that can get in the way of good communication about evaluation. Most funders we have spoken to recognize this challenge. We heard from funders that they try hard to avoid overstepping their role and to negotiate evaluation expectations that are flexible and reasonable. However, it can be challenging for a funder to know when to step in and help and when to respect the autonomy of the grant recipient.

We came away from these conversations feeling that funders share many of the sector’s concerns. While it is encouraging that funders are interested in engaging with us and hearing those concerns, there is clearly still a lot of work to be done. The “six factors” diagram (above) is a good discussion starter, and we welcome funders as well as nonprofits to continue to be part of the conversation with us.

This article originally appeared on the Ontario Nonprofit Network (ONN) website on July 21, 2016, and is reposted here with permission.